Besides lamenting life’s romantic complexities, The Clash sums up a customer’s decision whether to renew their software subscription remarkably well. And just like in the song, science shows relationship history matters when making the choice.

It’s all in the β

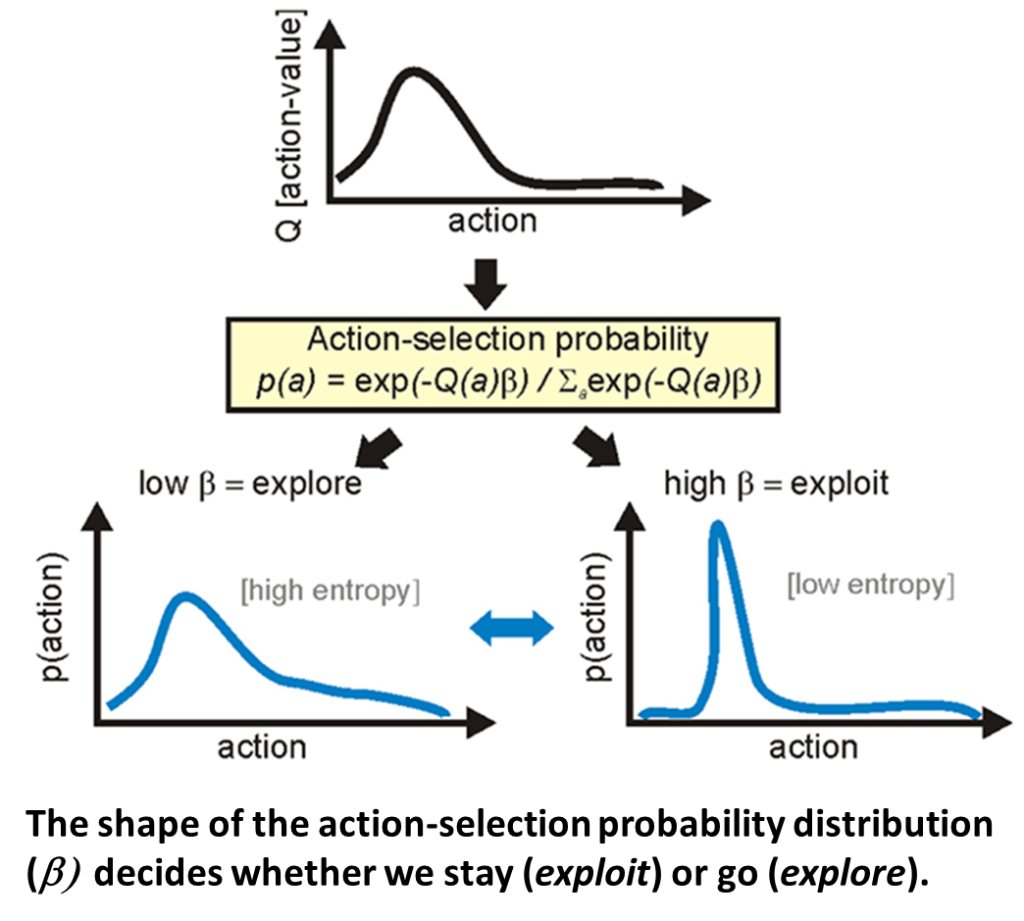

Humans occasionally face what’s called the exploration-exploitation tradeoff—we must choose between actions with well-known outcomes or actions with unsure, but potentially better outcomes. In other words, should you stick with what you have (exploit) or switch to something new (explore)? It’s the essential question when deciding whether to renew a software contract.

How does the brain physically make this choice?

Research suggests the mind uses a simple mathematical relationship when deciding to fish or cut bait.[1] During the learning process, the brain automatically calculates the value derived from a set of actions. The association is encoded and stored as a probability distribution function. The shape of this function, or β, then determines what we decide. Experiments show that if the distribution’s shape is low and flat, β is low and we explore elsewhere for greater rewards. But if the shape is tall and narrow, β is high and we stay put, exploiting the benefits we currently receive.
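
For readers who want the math, one common way to write this relationship in the reinforcement learning literature is the softmax rule. The notation here is an assumption for illustration, not taken from the experiments themselves: Q(a) is the learned value of action a, and β acts as an inverse temperature.

$$
P(a) = \frac{e^{\beta Q(a)}}{\sum_{b} e^{\beta Q(b)}}
$$

When β is near zero, every action is about equally likely no matter what has been learned, which is the flat shape. As β grows, almost all of the probability piles onto the highest-valued action, which is the tall, narrow shape.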

This decision probability distribution directs the basal ganglia, an area of the brain responsible for selecting actions. We make decisions using a complex web of neural networks, and while we may sometimes have an internal debate on what to do, our basal ganglia are the ultimate judge. This region compares the relative strengths of decision alternatives and makes the final call when a clear winner emerges.

Brain tonic

If it all boils down to β, then what alters it?

Evidence shows that dopamine is the catalyst for the stay-versus-go decision. Scientists have long known the neurotransmitter signals rewards and punishments during the learning process. When something is good for us, the brain delivers a shot of dopamine. When it’s bad for us, the brain shuts it off. We then subconsciously associate the emotional results with the actions that preceded them, storing the learned action-outcome information for future reference.

In the case of the exploration-exploitation tradeoff, neuroscientists have shown that tonic (or background) dopamine levels affect β’s shape. People made very different choices after scientists artificially altered dopamine concentrations in their bloodstreams. Test subjects stayed put when dopamine levels were high and looked elsewhere when levels were low.

Just how tonic dopamine in exploration-exploitation tradeoff decisions relates to phasic (or transient) dopamine in short-term learning is a subject of scientific debate.[2] However, commonly used reinforcement learning models include a gain parameter that scales the effect of learned values on biases in action choice, and research shows both tonic and phasic dopamine play a key role.[3]
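
To make the gain parameter concrete, here is a minimal sketch of softmax action selection, the kind of choice rule used in many reinforcement learning models. The function name, the two "stay" and "switch" options and their learned values are all hypothetical, chosen only to show how β scales the pull of learned values on the final choice.

```python
import math


def softmax_choice_probabilities(values, beta):
    """Turn learned action values into choice probabilities.

    beta is the gain (inverse temperature): it scales how strongly
    the learned values bias the choice.
    """
    scaled = [beta * v for v in values]
    # Subtract the largest scaled value before exponentiating so that
    # large beta values cannot overflow; the probabilities are unchanged.
    largest = max(scaled)
    weights = [math.exp(s - largest) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical learned values: staying with the current vendor has
# paid off somewhat better than the customer expects switching would.
learned_values = [1.0, 0.5]  # [stay, switch]

for beta in (0.1, 1.0, 10.0):
    stay, switch = softmax_choice_probabilities(learned_values, beta)
    print(f"beta={beta}: P(stay)={stay:.3f}, P(switch)={switch:.3f}")
```

With a small β the two options come out close to a coin flip even though staying has the higher learned value. With a large β the same values produce a near-certain decision to stay.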

What it means for the customer experience

If the end game for software companies is to ensure customers renew their software agreements, then boosting their customers’ β should be of paramount concern. Of course, one option is to strap down the customer, inject them with a hyperdopaminergic substance and then hand them a pen. A better option, however, is to ensure customers enjoy a long history of rewarding experiences. This boosts levels of dopamine in the short term and the long term, swaying the decision to stay rather than go.

Sources:

[1] Sutton, R. S., and Barto, A. G. (1998). Reinforcement Learning: An Introduction. Cambridge, MA: MIT Press.

[2] Humphries, M. D., and Redgrave, P. (2010). Midbrain dopamine neurons encode an exploration/exploitation trade-off, in 2010 Neuroscience Meeting Planner, Program No. 916.3 (San Diego: Society for Neuroscience).

[3] Beeler, J., Daw, N., Frazier, C., and Zhuang, X. (2010). Tonic dopamine modulates exploitation of reward learning. Frontiers in Behavioral Neuroscience, 04 November 2010.